Multi-Touch Attribution vs. Last-Click: What Actually Changes When You Switch

Your attribution model is probably costing you money. Not because the data is wrong, but because the model answers a question that doesn't matter as much as you think.

Most marketing teams treat the switch from last-click to multi-touch attribution as an upgrade. And it is, in the same way a wider rearview mirror is an upgrade. You see more. But you're still only looking backward. Multi-touch attribution redistributes credit across touchpoints more fairly than last-click ever did. What it can't tell you is whether any of those touchpoints actually caused the conversion.

That gap between tracking who touched the ball and knowing who scored is where real money gets wasted. Here's what concretely changes when you switch models, what multi-touch attribution still gets wrong, and where serious measurement actually starts.

What last-click attribution is and why it stuck around so long

Last-click attribution gives 100% of the conversion credit to the final touchpoint before someone buys. A prospect reads three blog posts, opens two emails, clicks a retargeting ad, then searches your brand name on Google and converts. The branded search click gets all the credit. Everything else gets zero. Every touchpoint that built awareness and consideration is invisible.

This seems obviously wrong. It is obviously wrong. But last-click had three things going for it.

It was dead simple to implement. Early web analytics tools tracked session referral sources. Crediting the last one required zero modeling. Google Analytics shipped it as the default. Ad platforms reported on it natively. A junior analyst could pull the report in five minutes.

It was dead simple to explain. "Paid search drove 400 conversions last month" is a sentence that survives a meeting with a CMO. No one needs to explain Shapley values or Markov chains.

And honestly, it worked well enough in simple cases. With short sales cycles, single-channel businesses, or direct-response campaigns, where most of the customer journey is one or two touchpoints, the question of credit distribution barely matters.

Where last-click fails is everything beyond those simple cases. It systematically ignores everything that creates demand and over-credits everything that captures it. The attribution funnel looks like the bottom is doing all the work, because the model can only see the bottom.

The industry has recognized this. In 2023, Google retired four rule-based attribution models from GA4 and Google Ads: first-click, linear, time-decay, and position-based. Data-driven attribution became the default. The reason Google gave was revealing: fewer than 3% of conversions used those models. Nobody was choosing them. The era of manually selecting a fixed-rule model from a dropdown is over.

What is multi-touch attribution and how does it work

Multi-touch attribution distributes conversion credit across multiple touchpoints in the customer journey instead of assigning it all to one. The premise: if five touchpoints contributed to a sale, each should get some share of the credit.

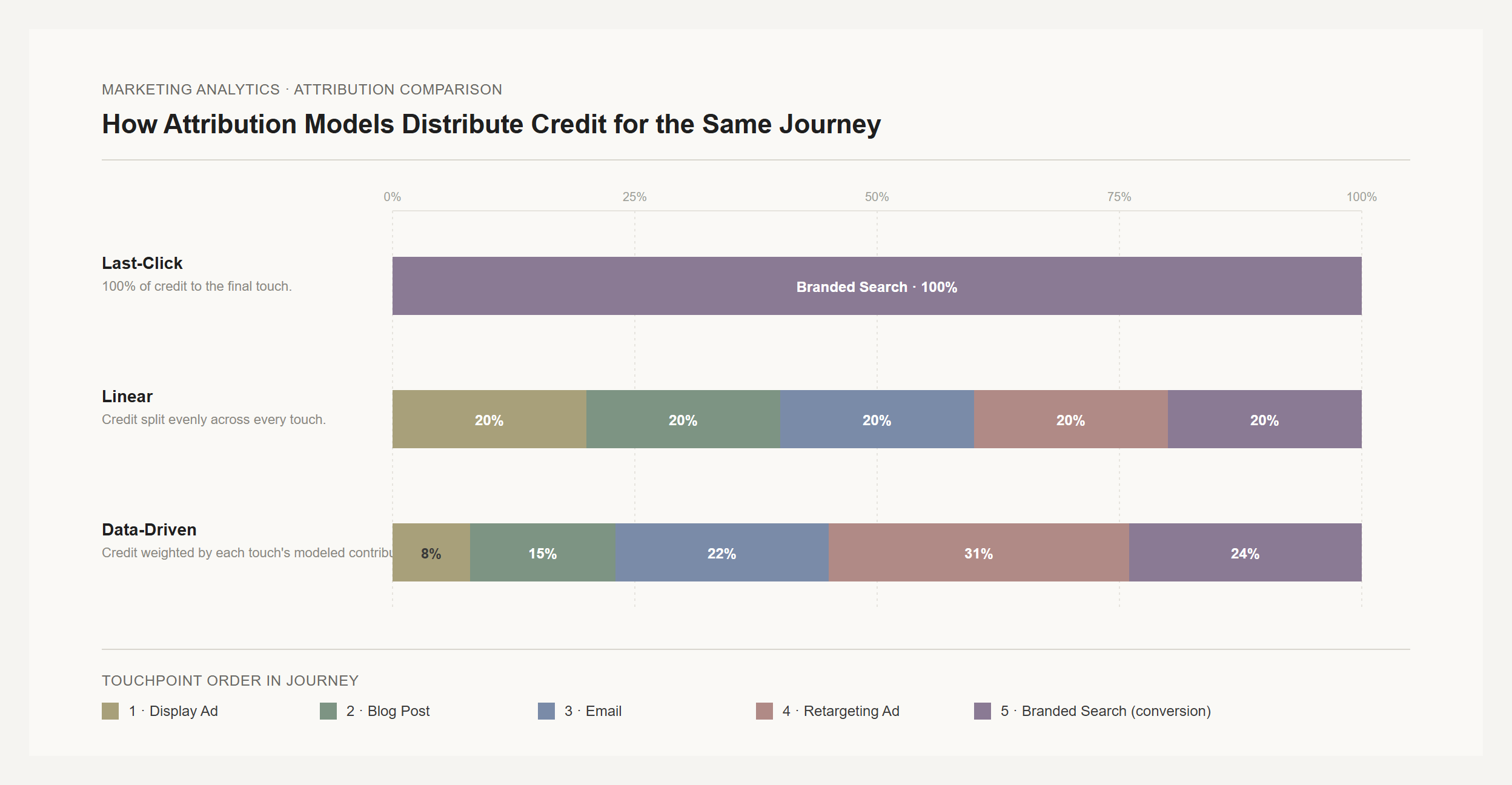

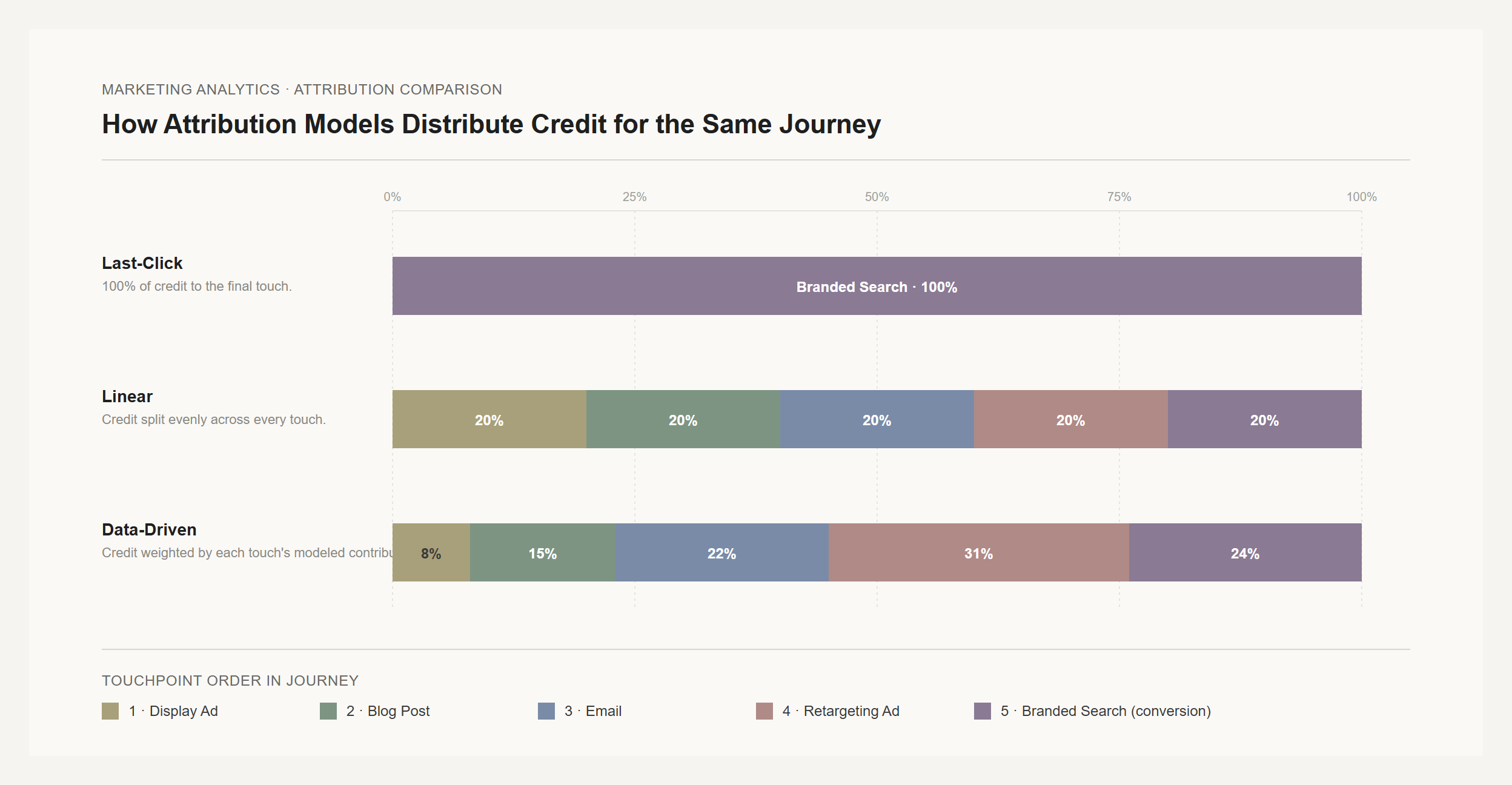

How that credit gets distributed depends on which multi-touch attribution model you use, and the differences between models are not small.

Rule-based models apply fixed formulas. Linear attribution splits credit equally, so five touchpoints each get 20%. Time-decay gives progressively more credit to touchpoints closer to conversion. Position-based (U-shaped) gives 40% to the first touch, 40% to the last, and splits the remaining 20% across everything in the middle. There's also W-shaped, which gives 30% each to the first touch, the lead-creation touch, and the opportunity-creation touch, and spreads the remaining 10% across the rest.

These are better than last-click. At least they acknowledge that a customer journey has a beginning and a middle, not just an end. But the formulas are arbitrary. There's no empirical basis for first and last touches each deserving exactly 40%. It's an assumption dressed up as a model.
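The fixed formulas above can be written out in a few lines. This is a minimal sketch, not any vendor's implementation: the function names, the five-touch journey, and the step-based `half_life` for time-decay are all illustrative assumptions (real tools usually decay by elapsed days rather than by position).

```python
def last_click(n: int) -> list[float]:
    """All credit goes to the final touchpoint."""
    return [0.0] * (n - 1) + [1.0]


def linear(n: int) -> list[float]:
    """Equal credit for every touchpoint."""
    return [1.0 / n] * n


def time_decay(n: int, half_life: float = 2.0) -> list[float]:
    """Credit halves for every `half_life` steps away from the conversion.

    Steps stand in for elapsed time here (an assumption for illustration).
    """
    raw = [0.5 ** ((n - 1 - i) / half_life) for i in range(n)]
    total = sum(raw)
    return [w / total for w in raw]


def position_based(n: int) -> list[float]:
    """U-shaped: 40% first, 40% last, 20% split across the middle."""
    if n == 1:
        return [1.0]
    if n == 2:
        return [0.5, 0.5]  # no middle touches: split evenly (assumption)
    middle = 0.2 / (n - 2)
    return [0.4] + [middle] * (n - 2) + [0.4]


# Hypothetical five-touch journey, oldest touch first.
journey = ["blog", "email", "display", "retargeting", "branded_search"]

for model in (last_click, linear, time_decay, position_based):
    shares = model(len(journey))
    row = ", ".join(f"{name}={share:.0%}" for name, share in zip(journey, shares))
    print(f"{model.__name__:>15}: {row}")
```

Running it on the same journey shows how much the answer depends on which formula you picked, which is the core objection to rule-based models.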

Data-driven attribution is fundamentally different. Google's DDA (now the GA4 default) uses Shapley values, borrowed from cooperative game theory. The concept: evaluate each touchpoint's contribution by comparing conversion probability when it's present versus when it's absent across all possible combinations of touchpoints.

Here's what that means concretely. Say your data shows that customer journeys including display ads convert at 3%, and the same journeys without display convert at 2%. That one-point gap, a 50% relative lift in conversion probability, is display's marginal contribution in that comparison. A Shapley value averages such marginal contributions across every combination of the other touchpoints, which gives display its measured counterfactual contribution. The model runs this calculation for every touchpoint, accounting for sequence (display before search gets different credit than search before display). The result is fractional attribution: credit split into decimal shares based on your actual data, not a formula someone picked.
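To make the with-versus-without idea concrete, here is a toy Shapley calculation over three channels. It is a sketch of the textbook formula from cooperative game theory, not Google's DDA implementation. The conversion rates are invented for illustration (the search-only 2% and search-plus-display 3% echo the example above), and the sketch ignores touchpoint order, which production models may take into account.

```python
from itertools import combinations
from math import factorial

channels = ("display", "search", "email")

# Hypothetical conversion rate for journeys containing exactly these channels.
conversion_rate = {
    frozenset(): 0.000,
    frozenset({"display"}): 0.010,
    frozenset({"search"}): 0.020,
    frozenset({"email"}): 0.005,
    frozenset({"display", "search"}): 0.030,
    frozenset({"display", "email"}): 0.015,
    frozenset({"search", "email"}): 0.024,
    frozenset({"display", "search", "email"}): 0.036,
}


def shapley_values(players, value):
    """Average each player's marginal contribution over all coalitions."""
    n = len(players)
    result = {}
    for player in players:
        others = [p for p in players if p != player]
        phi = 0.0
        for size in range(n):  # coalition sizes 0 .. n-1
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for coalition in combinations(others, size):
                without = frozenset(coalition)
                with_player = without | {player}
                phi += weight * (value[with_player] - value[without])
        result[player] = phi
    return result


credit = shapley_values(channels, conversion_rate)
total = sum(credit.values())
for channel, phi in credit.items():
    print(f"{channel}: {phi:.4f} ({phi / total:.0%} of credit)")
```

The per-channel values always sum to the conversion rate of the full journey minus the empty one, which is what lets the result be read as fractional shares of credit.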

There's a catch. Google's DDA requires minimum data thresholds: roughly 300 conversions and 3,000 ad interactions over 30 days. Below that, the model doesn't have enough signal and results get unreliable. Most small advertisers won't hit that bar for every conversion type.

The benefits of multi-touch attribution: what concretely changes

The shift from last-click to multi-touch isn't theoretical. It changes what you see in your data, and what you see changes how you spend.

The typical pattern: paid search looks less dominant. Upper-funnel channels that never generated a last click (display, content marketing, video, social) start getting credit for the first time. The entire conversion path becomes visible instead of just the final step.

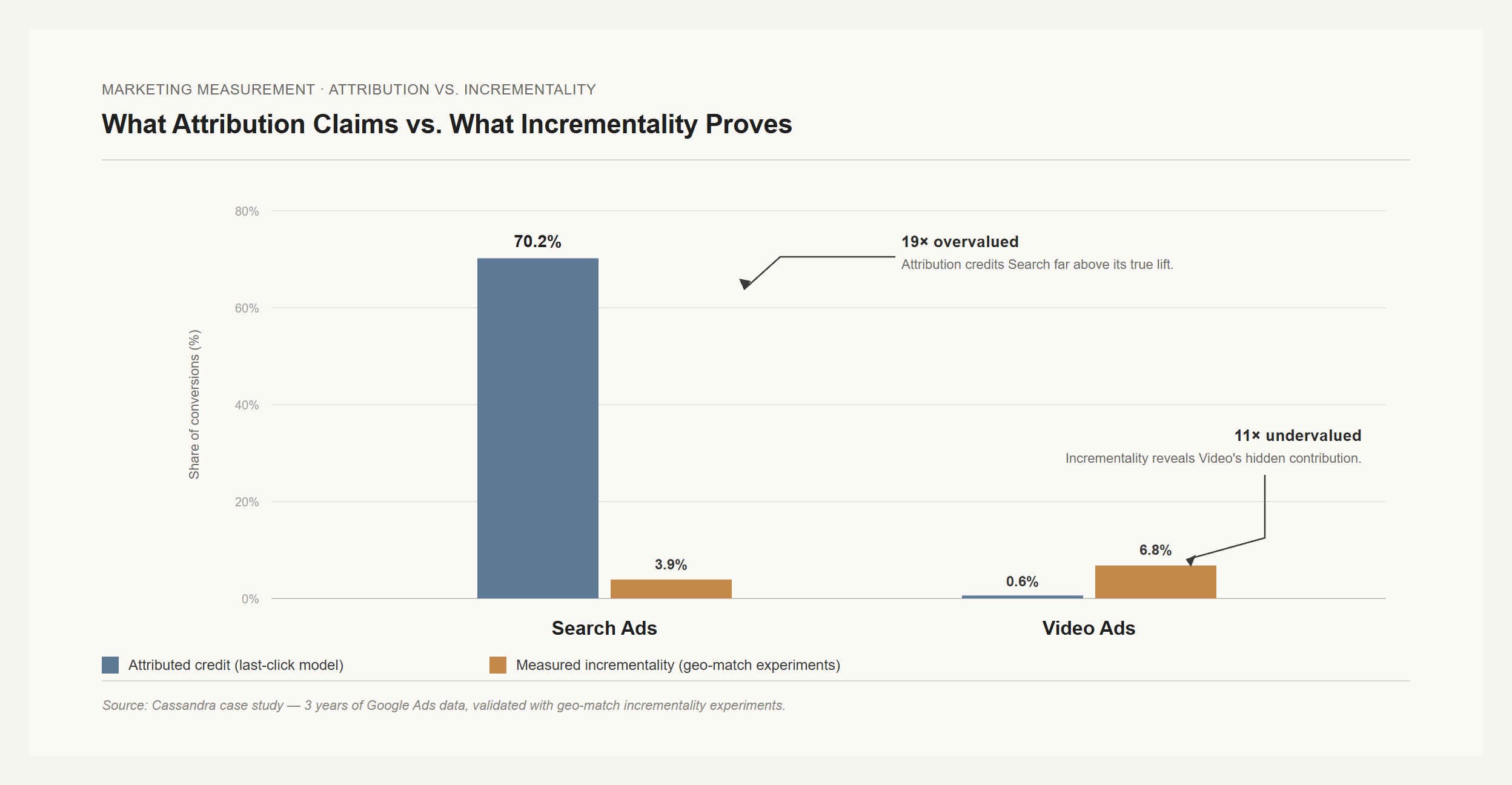

How dramatic are these shifts? The most striking evidence comes from a case study that compared attribution data against Marketing Mix Modeling and incrementality testing for a real brand across three years of Google Ads data. The divergence wasn't subtle:

| Channel | Attribution credit | Measured incrementality | How far off |
|---|---|---|---|
| Search Ads | 70.2% of conversions | 3.9% incremental | 19x overvalued |
| Video Ads | 0.6% of conversions | 6.8% incremental | 11x undervalued |

Search attribution claimed 70% of results. Incrementality measurement said closer to 4%. Video, which attribution nearly ignored, was contributing almost twice as much real incremental value as search.

It gets worse. When the researchers summed platform-attributed conversions across channels, the total was 162,112 against 121,392 actual CRM sales. That's 133% of real conversions. The platforms collectively claimed credit for a third more sales than actually existed. Every ad platform counted its own touchpoints without deduplicating against reality.
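The over-counting check is easy to reproduce on your own numbers. The sketch below uses the two totals quoted above; the function name is made up for illustration, and the only inputs you need are the summed platform-reported conversions and the deduplicated sales count from your CRM.

```python
def overcount(platform_attributed: int, actual_sales: int) -> tuple[float, int]:
    """Return (claimed conversions as a ratio of real sales, surplus claims)."""
    if actual_sales <= 0:
        raise ValueError("actual_sales must be positive")
    ratio = platform_attributed / actual_sales
    surplus = platform_attributed - actual_sales
    return ratio, surplus


# Totals from the case study above.
ratio, surplus = overcount(platform_attributed=162_112, actual_sales=121_392)
print(f"Platforms claimed {ratio:.1%} of real sales")
print(f"{surplus:,} claimed conversions have no matching sale")
```

A ratio well above 100% is a quick sign that platform-reported numbers should not be added together for budgeting.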

When the brand acted on the incrementality data and shifted 25% of search budget to video, they saw 18% more conversions from the same total spend within 30 days. Not a model projection. A real test with real revenue.

Attribution would have told them to keep pouring money into search. The measurement that actually tested causation told them the opposite.

Five problems multi-touch attribution still doesn't solve

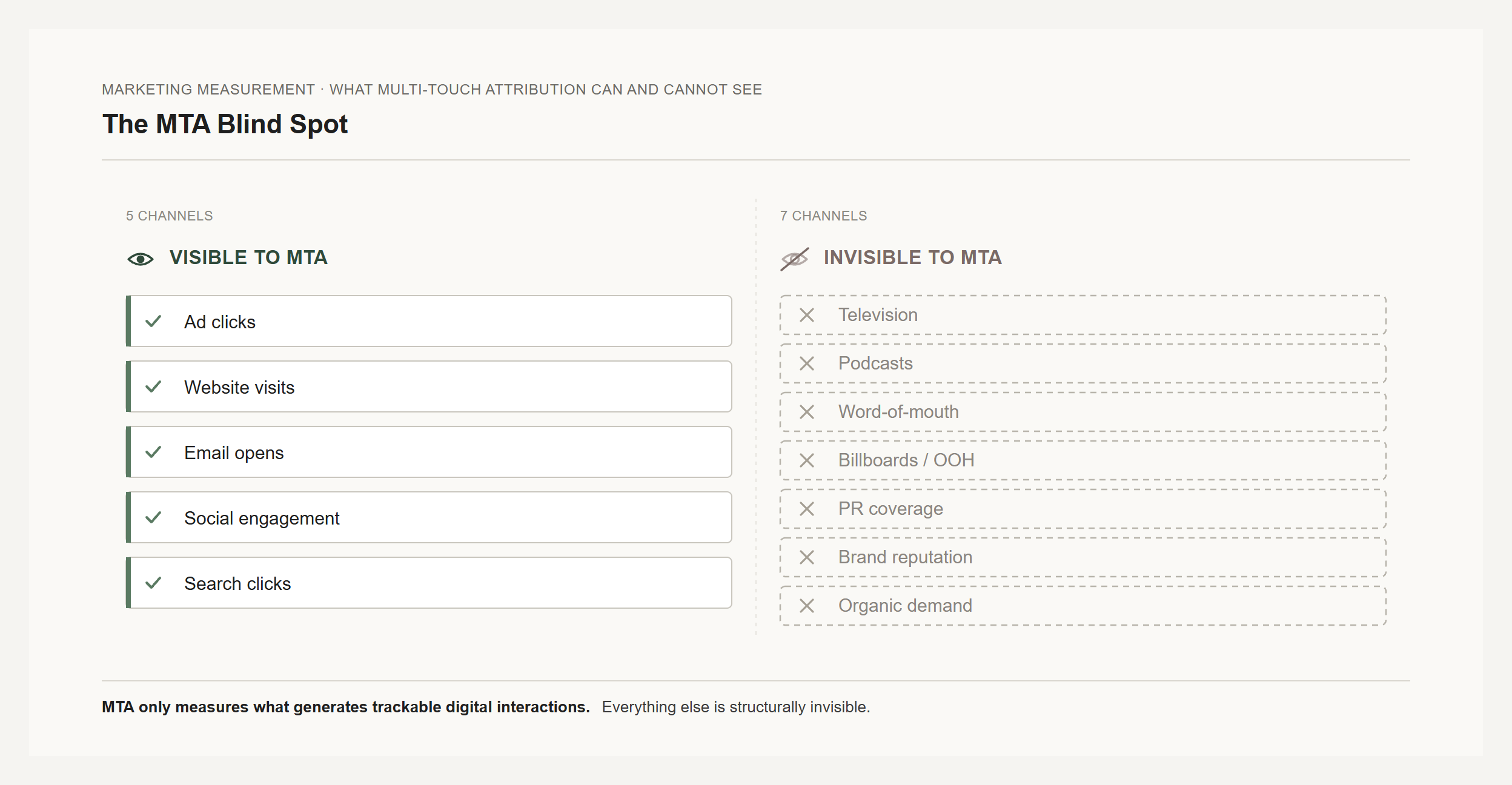

MTA is an upgrade from last-click. But calling it "better" risks missing that it inherits a deeper flaw no amount of model sophistication can fix: every attribution model tracks correlation, not causation. Knowing which channels a converter touched doesn't tell you which channels caused the conversion.

1. The correlation trap. A customer clicks a branded search ad and buys. Attribution gives search the credit. But that person typed your brand name into Google. They were coming regardless. The click preceded the purchase but didn't cause it. This isn't an edge case. Incrementality research consistently shows that 60–80% of branded search conversions are non-incremental, meaning the purchase would have happened without the ad. For retargeting, the figure is 40–70% non-incremental. MTA distributes this phantom credit more evenly across touchpoints, but the credit itself is still phantom.

2. Blind to anything that doesn't click. MTA tracks ad clicks, site visits, email opens. Addressable, digital, clickable touchpoints. Television, podcasts, word-of-mouth, billboards, PR, organic brand awareness? Invisible. This doesn't just create a gap. It creates a systematic bias. Digital channels look disproportionately important because they're the only ones in the dataset. Recall the case study above: video advertising, which builds awareness through views rather than clicks, was credited with 0.6% by attribution versus 6.8% by incrementality. Attribution was structurally incapable of seeing video's contribution because video doesn't generate the clicks MTA tracks.

3. The lower-funnel death spiral. This is MTA's most dangerous failure mode. Because MTA over-credits lower-funnel clickable touchpoints (branded search, retargeting, shopping ads), teams optimize toward them. Budget flows down-funnel. Upper-funnel investment gets cut because it "doesn't perform." Short-term, conversions hold steady. Brand momentum and organic demand keep filling the funnel even as you defund the campaigns that generate future demand. Then the brand erodes. The funnel thins. The lower-funnel campaigns that "performed so well" capture less and less. By the time the data shows the problem, the damage has compounded for quarters.

4. Privacy is eroding the data MTA needs. Multi-touch attribution requires user-level tracking across devices and platforms, stitching together someone's phone session with their laptop purchase with their tablet browse. Apple's ATT framework, browser restrictions on third-party cookies, and rising consent rejection rates under GDPR and CCPA all cut into that data. Each year, a larger share of customer journeys goes dark. A technical fix is unlikely to reverse this: privacy regulation is tightening globally, and browser vendors compete on privacy features. The data MTA depends on will continue to shrink.

5. Attribution windows cut off long journeys. Standard attribution windows of 7–30 days work for impulse purchases. A B2B prospect evaluating attribution software might take 90 days from first whitepaper download to demo request. A touchpoint at day 60? Zero credit, regardless of how much it influenced the decision. The longer your sales cycle, the more your attribution data lies by omission.
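The window effect is mechanical, which a short sketch makes visible. The journey, dates, and touchpoint names below are hypothetical; the point is only that any touch older than the lookback window is dropped before credit is assigned at all.

```python
from datetime import date, timedelta


def touches_in_window(touches, conversion_date, window_days):
    """Keep only touchpoints that fall inside the lookback window."""
    cutoff = conversion_date - timedelta(days=window_days)
    return [(name, day) for name, day in touches if cutoff <= day <= conversion_date]


# Hypothetical B2B journey ending in a demo request.
conversion = date(2024, 6, 30)
touches = [
    ("whitepaper_download", conversion - timedelta(days=90)),
    ("webinar", conversion - timedelta(days=60)),
    ("retargeting_ad", conversion - timedelta(days=10)),
    ("branded_search", conversion),
]

for window in (7, 30, 90):
    kept = [name for name, _ in touches_in_window(touches, conversion, window)]
    print(f"{window:>2}-day window sees: {kept}")
```

With a 30-day window, the whitepaper and webinar never enter the model, so no attribution rule, however sophisticated, can give them credit.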

The question attribution can't answer: would this have happened anyway?

Here's the uncomfortable truth that the last-click-vs-multi-touch debate obscures: the real gap in marketing measurement isn't between attribution models. It's between attribution (any model) and incrementality measurement.

Avinash Kaushik, former Google Digital Marketing Evangelist, puts it in terms that are hard to misunderstand: attribution and incrementality are "chalk and cheese." Attribution distributes credit among touchpoints a converter happened to touch. Incrementality identifies conversions that wouldn't have happened without the marketing.

Think about what that means quantitatively. Kaushik's thought experiment: a company sees 10 conversions. Attribution distributes credit across every channel those customers touched. Useful for relative comparison. But incrementality asks a different question: if you switched off all marketing, how many of those 10 conversions still happen?

Kaushik's answer, across hundreds of companies: typically about 7. Three out of ten are genuinely incremental, actually caused by marketing. The other seven would have happened from brand reputation, word-of-mouth, organic search, direct traffic, and the simple momentum of existing as a company people already know. His broader estimate: true marketing incrementality typically falls between 0% and 25% of what attribution reports.

Let that land. If attribution says marketing drove 1,000 conversions, incrementality might show that 250 or fewer of them were genuinely caused by marketing. The rest would have happened regardless. Multi-touch attribution distributes credit more fairly among the touchpoints behind those 1,000 conversions, but if 750 of those conversions weren't incremental, you're optimizing the arrangement of credit for results your marketing didn't create.

This is what "switching models" should really mean. Not swapping last-click for multi-touch. That's worth doing. But the real shift is from asking "who gets credit?" to asking "what actually caused this?" From attribution of any kind toward incrementality measurement.

Multi-touch attribution vs. marketing mix modeling: what to actually use

If attribution is flawed and incrementality is the gold standard, what should a marketing team actually do? The honest answer: it depends on what you're deciding and what you can afford to invest in measurement.

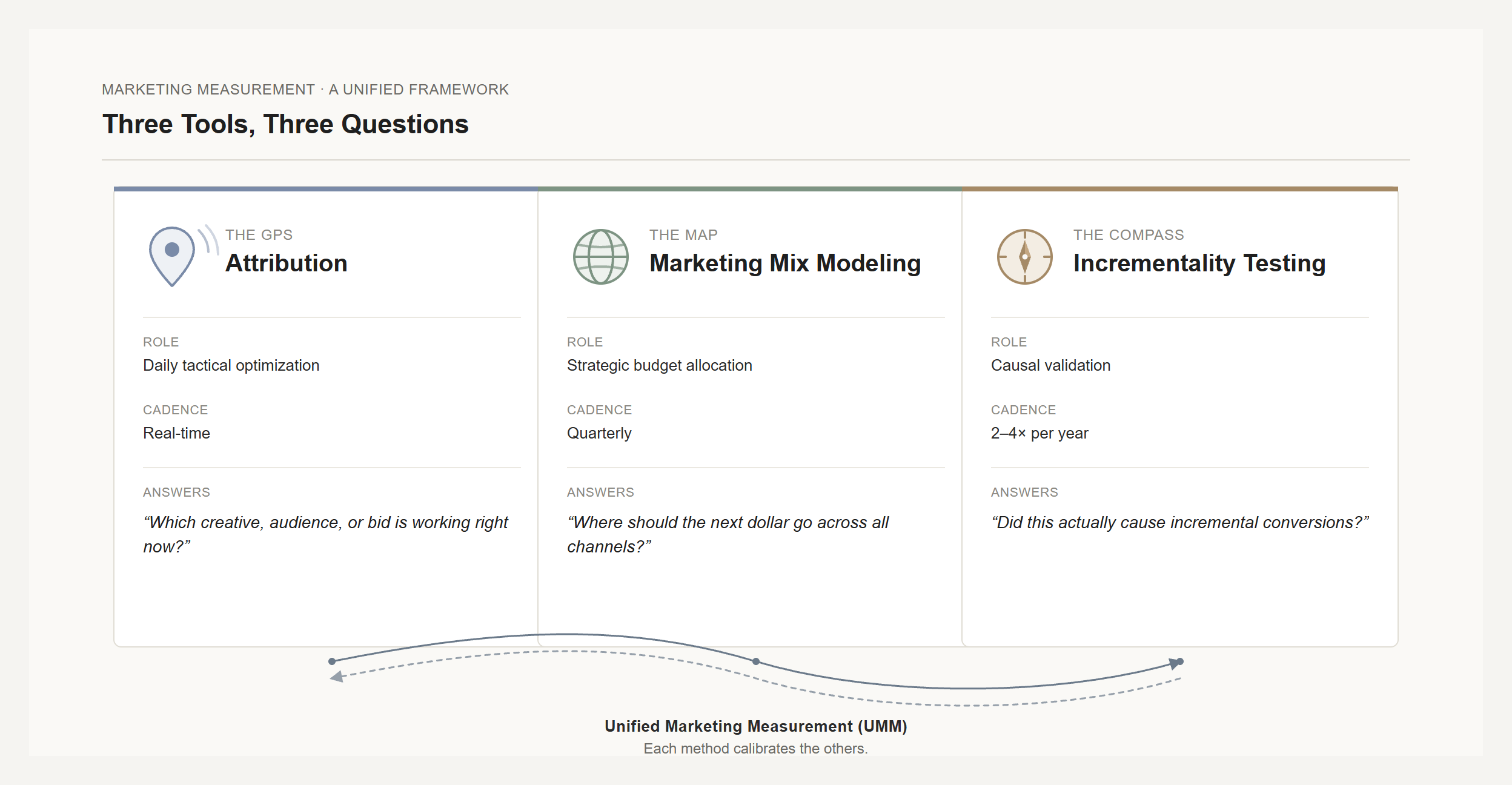

Three tools serve different roles. Think of them as navigation instruments:

Attribution is the GPS. Real-time, turn-by-turn, granular. It tells you which creative variation is performing, which audience segment is converting, where to adjust bids today. Useful for tactical optimization within channels you've already validated as genuinely incremental. Dangerous when used for strategic budget allocation. That's asking a GPS for the map.

Marketing mix modeling is the map. MMM uses aggregated historical data (spend, revenue, seasonality, competitive activity) to model each channel's contribution and diminishing returns. It sees everything attribution misses: TV, radio, brand, offline. Use it for quarterly and annual budget planning. Its limitation: it's backward-looking and needs substantial historical data, so it lags fast-moving channel changes.

Incrementality testing is the compass. It gives you causal ground truth through controlled experiments, typically geographic holdout tests where you pause a campaign in one region and compare conversion rates against a matched control. It answers the only question that matters for budget decisions: did this actually drive conversions that wouldn't have happened otherwise? Its limitation: you can't test everything continuously, so you test your 2–4 highest-spend channels and use the results to calibrate the other methods.

The three form a closed loop, sometimes called unified marketing measurement (UMM). MMM identifies where to test. Incrementality confirms what's real. Attribution optimizes execution within validated channels. Each quarter, the loop tightens and your measurement becomes more reliable.
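One simple way to picture the calibration step is an incrementality factor: the share of a channel's attributed conversions that a test found to be incremental, applied back to day-to-day attribution numbers. This is a sketch under stated assumptions, not a standard API. The function names and all figures are hypothetical, and leaving untested channels at a factor of 1.0 is a placeholder choice, not a claim that they are fully incremental.

```python
def incrementality_factor(incremental: float, attributed: float) -> float:
    """Share of attributed conversions that a test showed to be incremental."""
    if attributed <= 0:
        raise ValueError("attributed conversions must be positive")
    return incremental / attributed


def calibrate(attributed_by_channel: dict, factors: dict) -> dict:
    """Scale attributed conversions by each channel's tested factor.

    Channels without a test keep a factor of 1.0 (an assumption: untested,
    not validated).
    """
    return {
        channel: attributed * factors.get(channel, 1.0)
        for channel, attributed in attributed_by_channel.items()
    }


# Hypothetical test result: 300 of 1,000 attributed branded-search
# conversions were incremental.
factors = {"branded_search": incrementality_factor(300, 1_000)}
attributed = {"branded_search": 1_000, "video": 200}

print(calibrate(attributed, factors))
```

In practice the factor would be refreshed each time a new test completes, which is the "loop tightens" part of the cycle.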

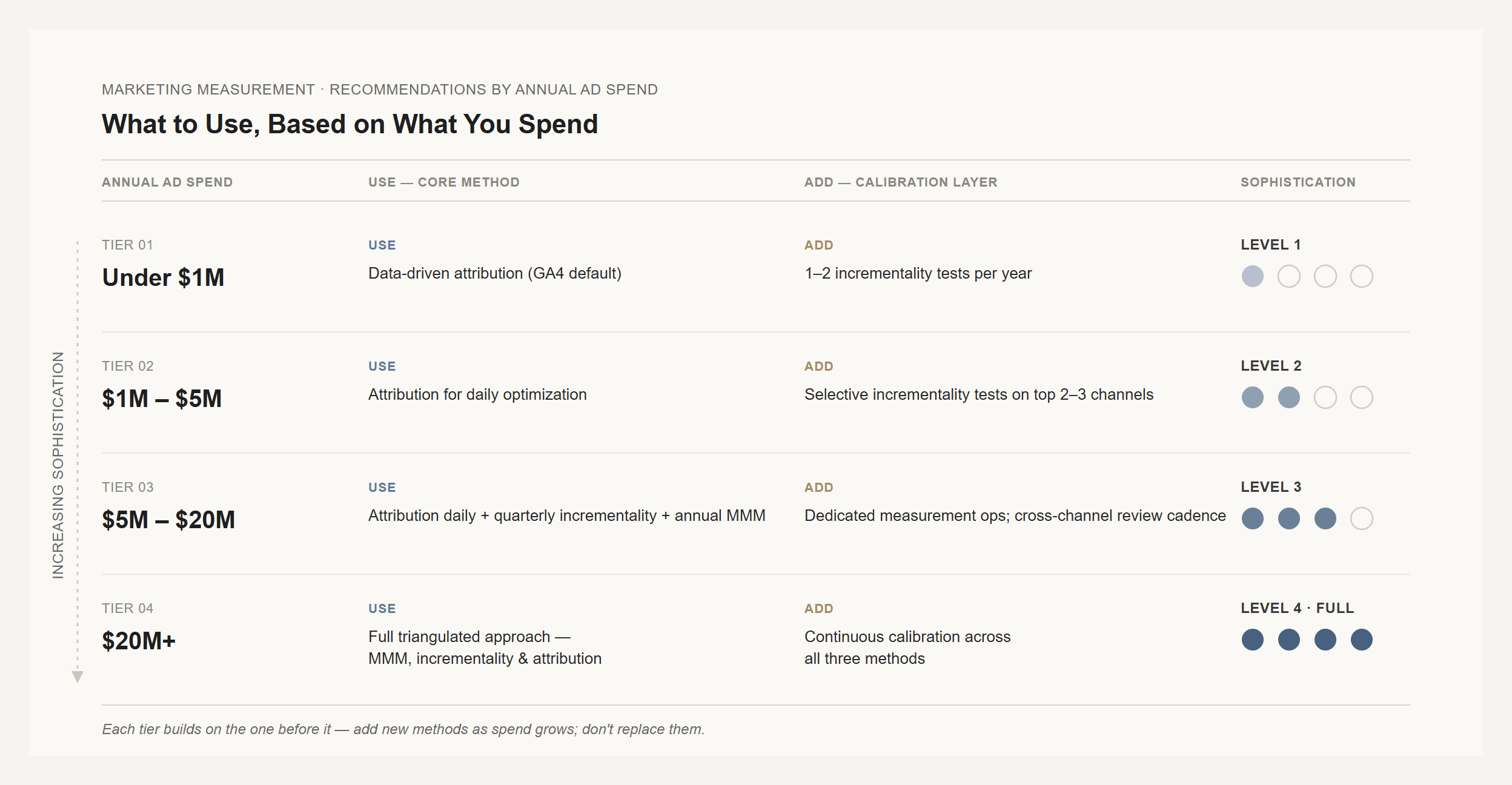

Which methods to prioritize, by spend level

Not every team needs all three. Here's a practical starting point:

| Annual ad spend | Where to start |
|---|---|
| Under $1M | Switch to data-driven attribution in GA4 (it's already the default, just verify). Run 1–2 incrementality tests per year on your highest-spend channel. |
| $1M–$5M | Data-driven attribution for daily optimization. Selective incrementality tests on your top 2–3 channels quarterly. |
| $5M–$20M | Attribution daily. Quarterly incrementality tests. Annual MMM refresh. |
| $20M+ | Full triangulated approach running continuously. MMM, incrementality, and attribution feeding each other. |

The minimum viable upgrade

If you're on last-click today and can only do one thing: run a geo-holdout test on your highest-spend channel. Pause the channel in one region for 2–4 weeks and compare conversion rates against a matched control region. The difference is the channel's true incremental contribution.

That single test will probably reveal a gap between what attribution claims and what's actually incremental. And the size of that gap will tell you how much to trust your current data.
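Reading the result of such a test comes down to comparing two conversion rates. The sketch below is deliberately simplified: it uses one paused region and one matched control, treats visitors as the denominator, and applies a standard two-proportion z-test as a rough significance check. All counts are hypothetical, and a real geo test would usually involve several regions and a pre-period comparison.

```python
from math import erf, sqrt


def geo_holdout_result(control_conv, control_visitors, paused_conv, paused_visitors):
    """Compare a region where the channel ran (control) with one where it was paused."""
    rate_on = control_conv / control_visitors
    rate_off = paused_conv / paused_visitors
    diff = rate_on - rate_off

    # Share of the control region's conversions that depended on the channel.
    incremental_share = diff / rate_on

    # Two-proportion z-test with a pooled conversion rate.
    pooled = (control_conv + paused_conv) / (control_visitors + paused_visitors)
    std_err = sqrt(pooled * (1 - pooled) * (1 / control_visitors + 1 / paused_visitors))
    z = diff / std_err
    normal_cdf = 0.5 * (1 + erf(abs(z) / sqrt(2)))
    p_value = 2 * (1 - normal_cdf)

    return {
        "rate_on": rate_on,
        "rate_off": rate_off,
        "incremental_share": incremental_share,
        "p_value": p_value,
    }


# Hypothetical four-week test.
result = geo_holdout_result(
    control_conv=600, control_visitors=20_000,
    paused_conv=540, paused_visitors=20_000,
)
for key, value in result.items():
    print(f"{key}: {value:.4f}")
```

Comparing `incremental_share` with the share of conversions your attribution report assigns to the same channel gives the gap discussed above.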

One documented case found that reallocating just 5–10% of budget based on test-calibrated insights unlocked 5–15% incremental revenue lift in the first quarter.

Further reading

- Marketing Attribution — our full guide to attribution, incrementality, and measurement

- Multi-Touch Attribution vs. Marketing Mix Modeling — the detailed comparison

- How to Run an Incrementality Test — the practical guide

- Lead Attribution Explained — attribution for lead-gen businesses

- How to Build a Marketing Measurement Strategy — the measurement framework that sits above attribution

- Customer Experience Analytics — measuring CX across the full journey

- Avinash Kaushik, "Attribution Is Not Incrementality"

- Kohavi, Tang, and Xu, Trustworthy Online Controlled Experiments (Cambridge University Press, 2020)

Frequently asked questions

Is multi-touch attribution better than last-click?

Yes, but the upgrade is smaller than you'd expect. MTA surfaces channels that last-click made invisible, which is genuinely useful for understanding the full customer journey. But it still tracks correlation, not causation. A touchpoint that happened before a conversion gets credit whether or not it influenced the purchase. For daily campaign optimization, MTA is a real improvement. For budget allocation? You need incrementality testing to know what's actually working.

What is data-driven attribution and how is it different from rule-based models?

Data-driven attribution uses machine learning, specifically Shapley values from cooperative game theory, to distribute fractional attribution credit based on your actual conversion path data. It evaluates each touchpoint's counterfactual contribution: what happens to conversion probability when this touchpoint is present versus absent? Rule-based models (linear, time-decay, U-shaped) apply fixed formulas regardless of your data. Google's DDA in GA4 is the most common implementation. It needs at least 300 conversions and 3,000 interactions over 30 days to work reliably.

How much does switching attribution models change your budget?

Often dramatically. In one case study, switching from attribution to incrementality-based measurement revealed that paid search was overvalued 19x relative to its actual incremental contribution, while video was undervalued 11x. When the brand shifted 25% of search budget to video, they got 18% more conversions from the same spend. The pattern is consistent: lower-funnel channels (branded search, retargeting) lose credit, upper-funnel channels (display, video, content) gain it.

What is the difference between multi-touch attribution and marketing mix modeling?

MTA tracks individual user journeys across digital touchpoints and assigns credit based on those paths. MMM uses aggregated historical data (total spend, total revenue, seasonality, competitive activity) to model each channel's contribution statistically. The key differences: MTA is granular but only sees digital clicks; MMM sees all channels (including TV, radio, offline) but at a strategic, not tactical, level. MTA gives daily feedback; MMM typically refreshes quarterly. Most mature measurement programs use both, with incrementality testing to validate them.

What is incrementality testing in marketing?

Incrementality testing uses controlled experiments, most commonly geo-holdout tests, to measure the causal impact of marketing. You pause a campaign in one geographic region while running it in matched control regions, then compare outcomes. The difference is the campaign's true incremental contribution. Unlike attribution, which tracks touchpoints correlated with conversions, incrementality directly measures what would have happened without the marketing. It's the closest thing marketing has to a clinical trial, and it's the gold standard for budget decisions.

Can I use all three measurement methods together?

You can, and you should. The recommended approach is a calibrated loop: attribution for daily tactical optimization (bid management, creative testing, audience targeting), MMM for strategic cross-channel budget allocation, and incrementality testing to validate that both are giving you accurate signals. Run incrementality tests on your highest-spend channels at least annually. Feed the results into your MMM as calibration data. Use attribution only within channels that incrementality has confirmed are genuinely incremental. Each round makes the whole system smarter.